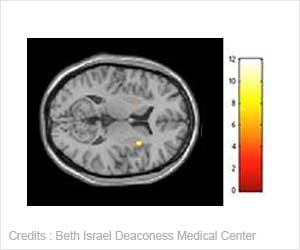

Functional MRI scans of brain entropy may be a new means of understanding human intelligence.

TOP INSIGHT

A greater variety of nerve circuits used to interpret the surrounding world results in more versatile processing of information.

"Our study offers the first solid evidence that functional MRI scans of brain entropy are a new means to understanding human intelligence," says study lead investigator Glenn Saxe, MD, a professor in child and adolescent psychiatry at NYU School of Medicine and a member of NYU Langone Health's Neuroscience Institute.

"Human intelligence is so meaningful because it is about the capacity to understand whatever may come, when there is no way beforehand to know what may come," says Saxe. "So, an intelligent brain has to be flexible in the number of possible ways its nerve cells, or neurons, may be rearranged. And that is what entropy is all about."

If further research proves successful, Saxe predicts that fMRI scans of brain entropy could one day help in assessing problems in brain function in people with depression, post-traumatic stress disorder, or autism, in which processing information becomes difficult.

Functional MRI scans use magnetic fields and radio waves to measure subtle changes in blood flow to detect which brain cells and circuits are active or inactive.

Researchers compared hundreds of fMRI scans taken milliseconds apart. The scans revealed the number of possible combinations of electrically active brain cells available to interact with each other in specific regions of the brain.
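To make the idea of counting "possible combinations" concrete, here is a minimal sketch in Python. It is an illustration only, not the study's actual method, which the article does not specify. It computes Shannon entropy over on/off activity patterns. The function name `pattern_entropy`, the three hypothetical regions, and the toy data are all assumptions made for this example.

```python
from collections import Counter
from math import log2


def pattern_entropy(patterns):
    """Shannon entropy, in bits, of a sequence of observed activity patterns."""
    counts = Counter(patterns)
    total = len(patterns)
    # Sum p * log2(1/p) over each distinct pattern's observed frequency p.
    return sum((n / total) * log2(total / n) for n in counts.values())


# Toy, made-up data (not from the study): each tuple marks whether each of
# three hypothetical regions is active (1) or inactive (0) in one scan.
rigid = [(1, 0, 0)] * 8  # the same pattern in every scan
flexible = [
    (0, 0, 0), (0, 0, 1), (0, 1, 0), (0, 1, 1),
    (1, 0, 0), (1, 0, 1), (1, 1, 0), (1, 1, 1),
]  # every possible pattern appears once

print(pattern_entropy(rigid))     # 0.0
print(pattern_entropy(flexible))  # 3.0
```

In this toy setting, a series of scans that always shows the same pattern has zero entropy, while one that visits every possible pattern equally often reaches the maximum of 3 bits for three regions.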

Scientists next compared their statistical measures of relatively higher or lower entropy with participants' scores on two standard IQ tests: the Shipley-Hartford test, which gauges verbal skills, and the Wechsler test, which assesses problem-solving abilities.

If brain entropy could offer useful insight into intelligence, Saxe proposed, then it should track closely with IQ scores.

People with average intelligence have an IQ score of about 100, Saxe says; participants in the current study were above average, at 108.

According to Saxe, study participants' entropy scores were strongly tied to IQ. Using standard statistical techniques that were performed two different ways to ensure accuracy, the researchers found that entropy was significantly related to IQ in the brain regions where previous research has shown it matters most. Entropy scores closely matched IQ scores from the Shipley-Hartford test for the left side of the middle brain (the left inferior temporal lobe), which is tied to learning speech. Similarly, entropy scores tracked closely with those from the Wechsler test for the front region of the brain (bilateral anterior frontal lobes), a known center for organization, planning, and emotional control.

Source-Eurekalert

MEDINDIA
