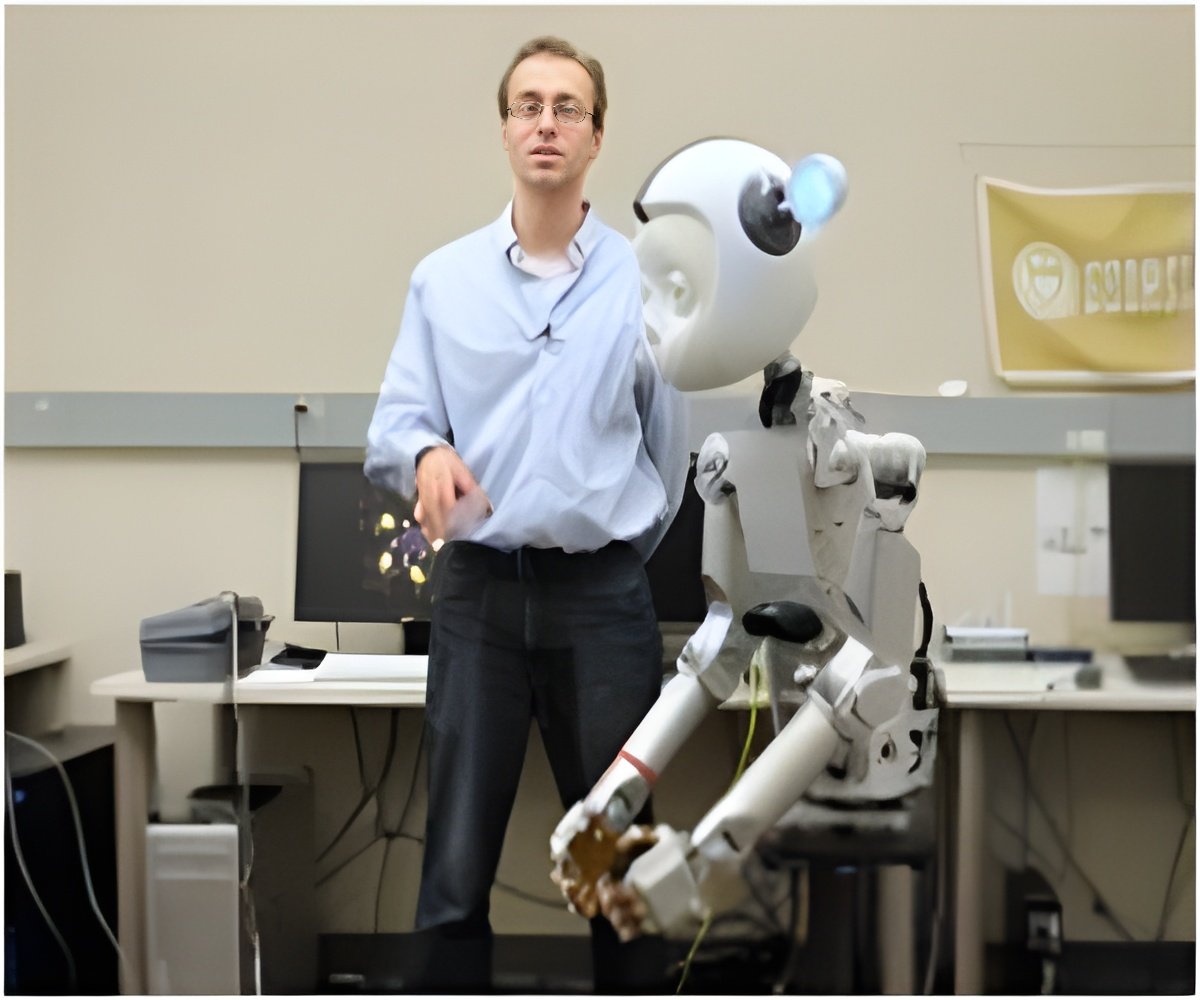

A novel artificial intelligence has been developed that can assist humans in the daily task of putting on clothes.

TOP INSIGHT

In a fast-moving world, getting dressed quickly can be a surprisingly complex task. This newly developed technology may help humans with it in the future.

The method, based on reinforcement learning, allowed the character to try the task thousands of times and provided reward or penalty signals when the character tried beneficial or detrimental changes to its policy.

The researchers' method then updates the neural network one step at a time to make the discovered positive changes more likely to occur in the future.
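The trial-and-reward loop described above can be illustrated with a very small sketch. This is not the researchers' system: it swaps in a basic REINFORCE-style policy-gradient update on a made-up toy task, and the three candidate "motions", the reward values, the step size, and the function names are all assumptions chosen for illustration. A list of three numbers stands in for the neural network's weights.

```python
import math
import random


def softmax(logits):
    """Turn raw scores into action probabilities."""
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def train(trials=5000, step_size=0.1, seed=0):
    """Repeat a toy task many times, nudging the policy after each try."""
    rng = random.Random(seed)
    # Hypothetical toy task: three candidate motions, only motion 2 succeeds.
    rewards = [-1.0, -1.0, 1.0]   # penalty, penalty, reward (assumed values)
    logits = [0.0, 0.0, 0.0]      # stand-in for neural network weights
    for _ in range(trials):
        probs = softmax(logits)
        action = rng.choices(range(3), weights=probs)[0]
        reward = rewards[action]
        # One small update step: rewarded choices become more likely,
        # penalized choices become less likely.
        for k in range(3):
            grad = (1.0 if k == action else 0.0) - probs[k]
            logits[k] += step_size * reward * grad
    return softmax(logits)


if __name__ == "__main__":
    print(train())
```

After enough trials, the probability assigned to the rewarded motion should end up close to 1, which mirrors the idea of making discovered positive changes more likely to occur in the future.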

"We've opened the door to a new way of animating multi-step interaction tasks in complex environments using reinforcement learning," said lead author Alexander Clegg, a doctoral student at the Georgia Institute of Technology.

"There is still plenty of work to be done continuing down this path, allowing simulation to provide experience and practice for task training in a virtual world."

With the trained neural network, the researchers were able to re-create a variety of ways an animated character puts on clothes. Key to this was incorporating the sense of touch into their framework to overcome the challenges of cloth simulation.

The team will present their work at SIGGRAPH Asia 2018 in Tokyo.

Source-IANS

MEDINDIA
