UNIGE scientists study how the synaptic feedback system shapes learning processes in the cerebral cortex.

TOP INSIGHT

Uncovering the role of the synaptic feedback system in the brain's learning processes could help in developing more efficient artificial intelligence.

How whiskers shed light on feedback systems

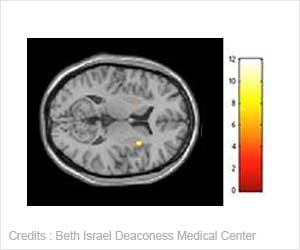

The whiskers on a mouse's snout are specialized for tactile sensing and play a major role in the animal's ability to perceive its immediate surroundings. The part of the cortex that processes sensory information from the whiskers continuously optimizes its synapses in order to learn new features of the tactile environment. This makes it a useful model for understanding the role of feedback systems in synaptic learning mechanisms.

The UNIGE scientists isolated a whisker-related feedback circuit and used electrodes to measure the electrical activity of neurons in the cortex. They then mimicked the sensory input by stimulating a specific part of the cortex known for processing this information, while at the same time using light to control the feedback circuit. "This ex vivo model allowed us to control the feedback independently of the sensory input, which is impossible to do in vivo. However, disconnecting the sensory input from the feedback was essential to understanding how the interplay between the two leads to synaptic strengthening," adds Holtmaat.

Inhibitory neurons gate the information

Now that they have precisely identified which neurons are involved in this mechanism, the scientists plan to test their findings in living animals. They want to check whether the inhibitory neurons behave as predicted when a mouse needs to learn new sensory information or discovers new features of its tactile environment.

How do brain circuits optimize themselves? How can a system teach itself by reading out its own activity? Beyond its relevance to learning in animals, this question is also at the heart of machine learning. Indeed, some deep learning specialists try to mimic brain circuits to build artificially intelligent systems. Insights such as those provided by the UNIGE team might be relevant to unsupervised learning, a branch of machine learning concerned with circuit models that can self-organize and optimize how they process new information. This is important for building efficient voice- or face-recognition programs, for example.

Source: EurekAlert!

MEDINDIA