A team of neuroscientists has demonstrated how complex a visual task, such as processing an image made up of both simple and intricate elements, actually is for the brain.

The researchers published two studies within days of each other that show the complexity of the task.

The findings of the studies by Salk Institute for Biological Studies neuroscientists Tatyana Sharpee and John Reynolds take two important steps forward in understanding vision.

Sharpee, an associate professor in the Computational Neurobiology Lab, said that understanding how the brain creates a visual image could help people whose brains malfunction in various ways, such as those who have lost the ability to see.

She said that one way of solving that problem is to figure out how the brain (not the eye, but the cortex) processes information about the world. If you have that code, you can directly stimulate neurons in the cortex and allow people to see.

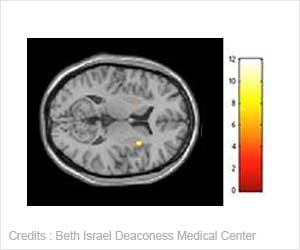

In these studies, the neurobiologists sought to figure out how a part of the visual cortex known as area V4 is able to distinguish between different visual stimuli even as the stimuli move around in space. V4 is responsible for an intermediate step in the neural processing of images.

He said that in earlier stages of processing, these windows, known as receptive fields, are small and only have access to information within a restricted region of space.

Both new studies investigated the issue of translation invariance: the ability of a neuron to recognize the same stimulus no matter where it happens to fall within its receptive field.

The Neuron paper looked at translation invariance by analyzing the responses of 93 individual neurons in V4 to images of lines and shapes such as curves, while the PNAS study looked at the responses of V4 neurons to natural scenes full of complex contours.

The Salk researchers found that neurons that respond to more complicated shapes, like the curve in a 5 or in a rock, demonstrated decreased translation invariance. "They need that complicated curve to be in a more restricted range for them to detect it and understand its meaning," Reynolds said. "Cells that prefer that complex shape don't yet have the capacity to recognize that shape everywhere."

The findings of the two studies have been published in Neuron and, on June 24, in the Proceedings of the National Academy of Sciences (PNAS).

Source-ANI

MEDINDIA