New software developed by an Indian-origin scientist and his colleagues can pick out the happiest snaps from a wedding or judge the changing mood of a crowd.

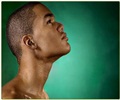

Abhinav Dhall at the Australian National University in Canberra and his team used face-tracking software to analyse the smiles in a group photo by noting the positions of nine points on each face, such as the corners of the mouth and eyes.

A machine learning algorithm, trained on photos that had been pre-labelled by humans, then used this data to give each face a smile intensity score.

The team also programmed the system to incorporate information from volunteers, who assessed how important the intensity of any individual's smile was to the overall mood score of a photo.

Faces near the centre of a picture were given a stronger weighting, for example, while partially obscured faces were less influential. When asked to gauge the happiness level of a photo, the system deviated from the opinion of a human by only around 7 per cent.
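The weighting idea can be illustrated with a short sketch. It is not the team's actual model: the `Face` fields, the 0-to-1 smile scale and the particular weight formula (centrality times visible fraction) are assumptions made only for illustration.

```python
import math
from dataclasses import dataclass


@dataclass
class Face:
    smile: float    # smile intensity, assumed to lie in [0, 1]
    x: float        # face centre as a fraction of image width (0..1)
    y: float        # face centre as a fraction of image height (0..1)
    visible: float  # fraction of the face that is not obscured (0..1)


def face_weight(face: Face) -> float:
    """Hypothetical weight: higher near the image centre, lower when obscured."""
    dist = math.hypot(face.x - 0.5, face.y - 0.5)
    max_dist = math.hypot(0.5, 0.5)          # distance from centre to a corner
    centrality = 1.0 - dist / max_dist       # 1 at the centre, 0 at a corner
    # Faces at the edge still count, but only half as much as central ones.
    return (0.5 + 0.5 * centrality) * face.visible


def group_mood(faces: list[Face]) -> float:
    """Weighted average of smile intensities; 0.0 if no face carries weight."""
    total = sum(face_weight(f) for f in faces)
    if total == 0:
        return 0.0
    return sum(face_weight(f) * f.smile for f in faces) / total


if __name__ == "__main__":
    photo = [
        Face(smile=0.9, x=0.5, y=0.5, visible=1.0),  # central, beaming
        Face(smile=0.1, x=0.0, y=0.0, visible=1.0),  # corner, not smiling
    ]
    print(round(group_mood(photo), 3))
```

In this toy example the central smiling face pulls the group score above the plain average of the two smile values, which is the effect the volunteers' judgements were used to capture.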

Dhall said that the aim is to be able to assess the overall mood of a group from a single shot.

"If the mood score goes down over time, we can assume that the group are getting angry," said Dhall.

The software was presented at the Conference on Multimedia Retrieval in Dallas, Texas, last week.

Source: ANI

MEDINDIA
