‘Different languages have similar neural signatures for describing events and scenes.’

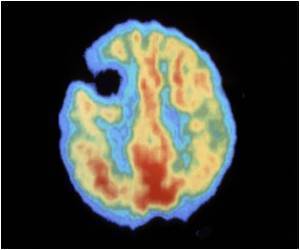

"This tells us that, for the most part, the language we happen to learn to speak does not change the organization of the brain," said Marcel Just, the D.O. Hebb University Professor of Psychology and pioneer in using brain imaging and machine-learning techniques to identify how the brain deciphers thoughts and concepts. "Semantic information is represented in the same place in the brain and the same pattern of intensities for everyone. Knowing this means that brain to brain or brain to computer interfaces can probably be the same for speakers of all languages," Just said.

For the study, 15 native Portuguese speakers, eight of whom were bilingual in Portuguese and English, read 60 sentences in Portuguese while in a functional magnetic resonance imaging (fMRI) scanner. A CMU-developed computational model was able to predict which sentences the participants were reading in Portuguese, based only on activation patterns.

The computational model uses a set of 42 concept-level semantic features and six markers of the concepts' roles in the sentence, such as agent or action, to identify brain activation patterns in English.

With 67 percent accuracy, the model predicted which sentences were read in Portuguese. The resulting brain images showed that the activation patterns for the 60 sentences were in the same brain locations and at similar intensity levels for both English and Portuguese sentences.

"The cross-language prediction model captured the conceptual gist of the described event or state in the sentences, rather than depending on particular language idiosyncrasies. It demonstrated a meta-language prediction capability from neural signals across people, languages and bilingual status," said Ying Yang, a postdoctoral associate in psychology at CMU and first author of the study.

Source: EurekAlert