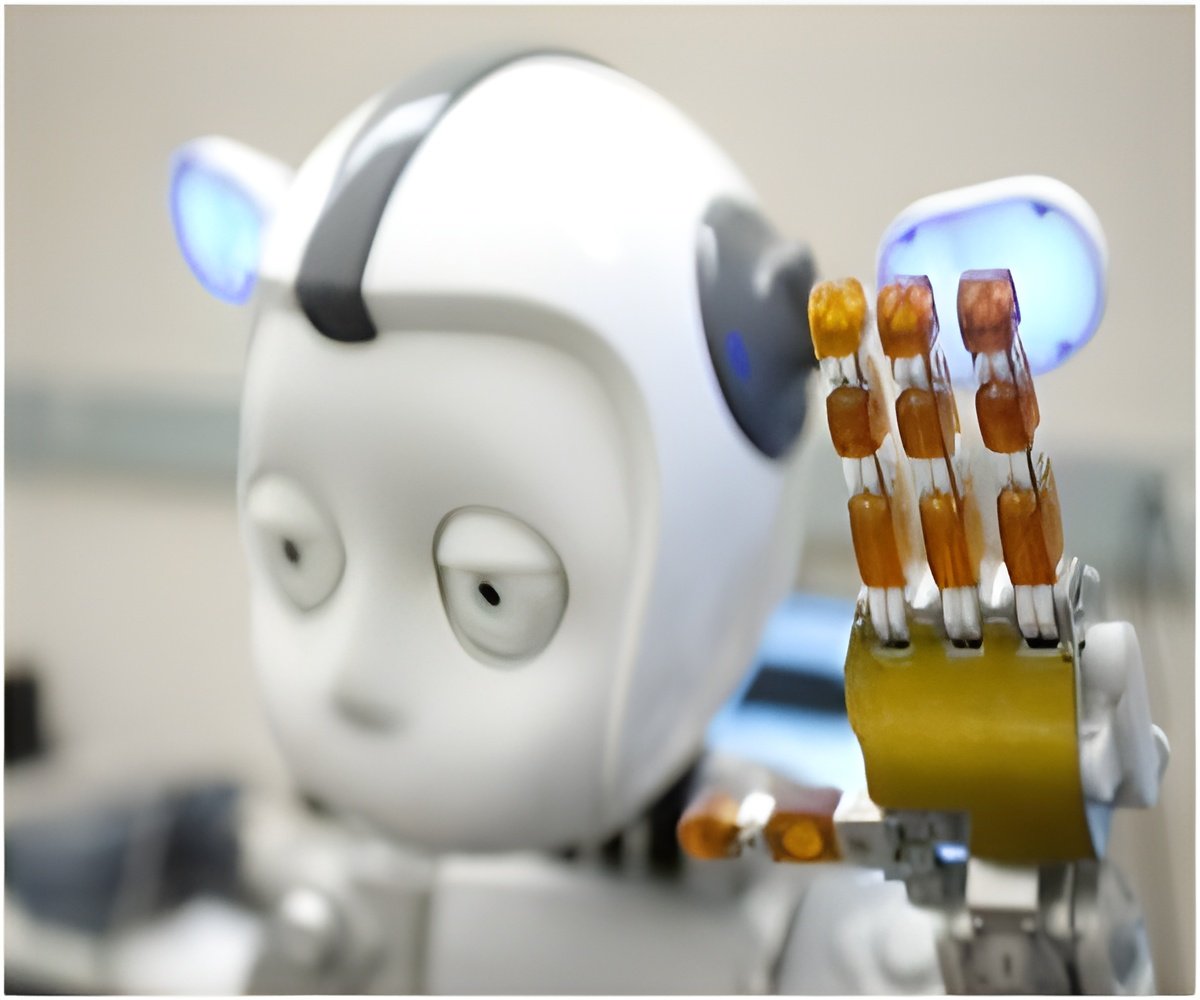

Many studies have found that children, including those with autism, are attracted to robots. This motivated scientists to combine a robot with an adaptive intelligence system and use the setup to train autistic children in joint attention, which is the ability to coordinate attention with another person on an object or event.

The researchers designed a system called ARIA (Adaptive Robot-Mediated Intervention Architecture), which adapts itself to the child’s training needs. They set up a room with flat-panel displays attached to the side walls. At the front, a robot stood on a table facing the child, who was seated in a chair. The child's head movements were tracked using cameras attached to the walls and LEDs attached to a cap worn by the child.

The robot, NAO, was programmed with a training protocol involving a series of gestures and verbal instructions similar to those given by human therapists. Training began by asking the child to look at an image or video on a display panel. If the child did not respond, the verbal prompts were combined with gestures.
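The prompting protocol described above can be pictured as a simple escalation loop. The Python sketch below is illustrative only and is not the ARIA implementation: the names `PROMPT_LEVELS`, `run_trial` and `child_looked_at_target` are hypothetical, and the two prompt levels are an assumption based solely on the article's statement that gestures were added when a verbal prompt alone drew no response.

```python
from typing import Callable, List, Tuple

# Hypothetical prompt levels (assumption): a verbal prompt first,
# then the verbal prompt combined with a gesture.
PROMPT_LEVELS: List[Tuple[str, ...]] = [
    ("verbal",),
    ("verbal", "gesture"),
]


def run_trial(
    child_looked_at_target: Callable[[Tuple[str, ...]], bool],
) -> Tuple[bool, int]:
    """Escalate through the prompt levels until the child looks at the target.

    `child_looked_at_target` stands in for the head-tracking check; it
    receives the cues just given and returns True if the child responded.
    Returns (success, number_of_prompts_given).
    """
    for attempt, cues in enumerate(PROMPT_LEVELS, start=1):
        if child_looked_at_target(cues):
            return True, attempt
    return False, len(PROMPT_LEVELS)


# Simulated child who responds only once a gesture is added.
success, prompts_used = run_trial(lambda cues: "gesture" in cues)
```

In a real setup the callback would presumably be driven by the head-tracking cameras rather than a simulated response.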

A study involving 12 children was conducted to test the setup. Six of them were autistic and the other six formed the control group. Both groups attended training sessions led by the robot and by a human therapist.

Both groups stayed attentive for longer during the training sessions led by the robot. When the training was given by the therapist, the autistic children's attention span was lower than that of the control group.

The study and its findings are reported in IEEE Transactions on Neural Systems and Rehabilitation Engineering.
