‘By automatically animating an articulatory talking head in real time from ultrasound images, speech therapy can be made easy.’


As well as the face and lips, this avatar shows the tongue, palate and teeth, which are usually hidden inside the vocal tract. This "visual biofeedback" system, which should be easier to understand and should therefore lead to better correction of pronunciation, could be used for speech therapy and for learning foreign languages. For a person with an articulation disorder, speech therapy relies partly on repetition exercises: the practitioner qualitatively analyzes the patient's pronunciation and explains orally, with the help of drawings, how to place the articulators, particularly the tongue, whose position patients are generally unaware of.

How effective therapy is depends on how well the patient can integrate what they are told. It is at this stage that "visual biofeedback" systems can help. They let patients see their articulatory movements in real time, and in particular how their tongues move, so that they are aware of these movements and can correct pronunciation problems faster.

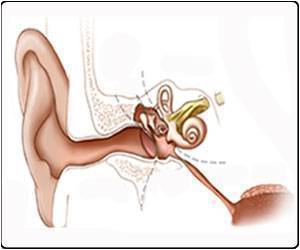

For several years, researchers have been using ultrasound to design biofeedback systems. The image of the tongue is obtained by placing a probe under the jaw, similar to the probes conventionally used to look at a heart or fetus. This image is sometimes considered difficult for a patient to use because its quality is limited and it provides no information on the location of the palate and teeth.

In this new work, the researchers propose to improve this visual feedback by automatically animating an articulatory talking head in real time from ultrasound images. This virtual clone of a real speaker, in development for many years at the GIPSA-Lab, produces a contextualized and therefore more natural visualization of articulatory movements.


The algorithm exploits a probabilistic model based on a large articulatory database acquired from an "expert" speaker capable of pronouncing all of the sounds in one or more languages. This model is automatically adapted to the morphology of each new user, over the course of a short system calibration phase, during which the patient must pronounce a few phrases.
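To make the idea of "a model trained on an expert, then adapted to each user" more concrete, here is a minimal Python sketch. It is not the researchers' algorithm, whose details are not given here. It assumes a single scalar ultrasound feature and a single articulatory parameter, fits a joint Gaussian on the expert's data, and adapts to a new user by matching the mean and spread of the user's calibration features to the expert's. All function names and numbers are hypothetical.

```python
from statistics import mean, pstdev

def fit_expert_model(features, articulations):
    """Fit a joint Gaussian over (feature, articulation) pairs from the expert.

    Returns the quantities needed for the conditional mean
    E[articulation | feature]. Illustrative only; one dimension each.
    """
    mx, my = mean(features), mean(articulations)
    n = len(features)
    var_x = sum((x - mx) ** 2 for x in features) / n
    cov_xy = sum((x - mx) * (y - my)
                 for x, y in zip(features, articulations)) / n
    return {"mx": mx, "my": my, "sx": var_x ** 0.5, "slope": cov_xy / var_x}

def fit_user_adaptation(calibration_features, model):
    """Build a map from the user's feature scale to the expert's.

    Assumption: during calibration the user produces roughly the same
    sounds as the expert, so matching mean and standard deviation is enough.
    """
    ux, us = mean(calibration_features), pstdev(calibration_features)
    scale = model["sx"] / us
    return lambda x: model["mx"] + scale * (x - ux)

def predict_articulation(model, expert_scale_feature):
    """Conditional mean of the articulatory parameter given the feature."""
    return model["my"] + model["slope"] * (expert_scale_feature - model["mx"])

# Hypothetical expert database and a short calibration recording from a user
# whose features are shifted and stretched relative to the expert's.
expert = fit_expert_model([0, 1, 2, 3, 4], [1, 3, 5, 7, 9])
to_expert_scale = fit_user_adaptation([10, 13, 16, 19, 22], expert)
tongue_param = predict_articulation(expert, to_expert_scale(19))
```

In a real system both the features and the articulatory parameters would be high-dimensional and the model considerably richer; the sketch only illustrates the two stages named in the paragraph above, training on the expert and calibrating per user.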


Source: EurekAlert